AI Models Are Getting Cheaper Fast: What It Means for You

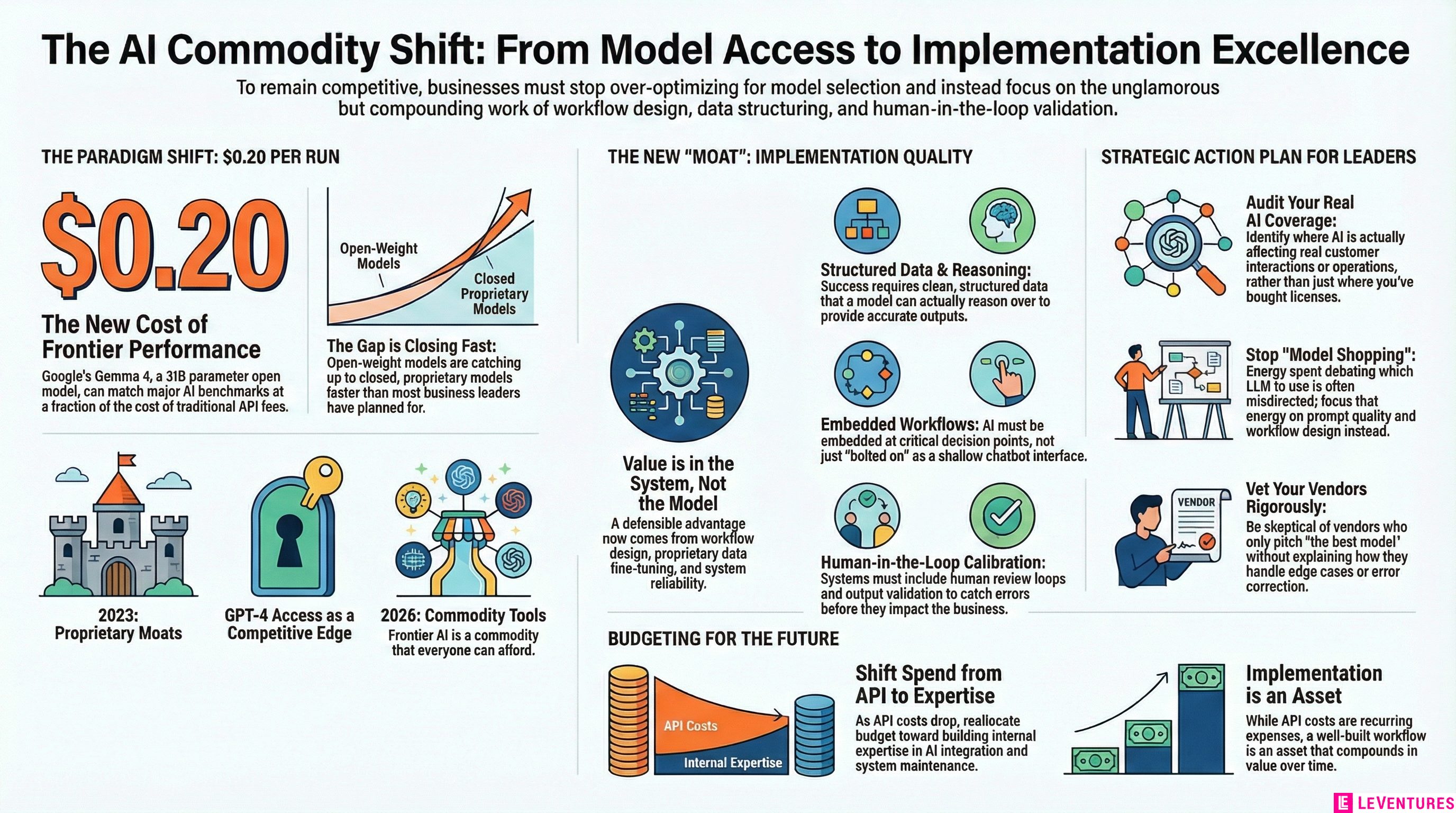

Google just released Gemma 4, a 31-billion parameter open model that outperformed nearly every major AI on standard benchmarks. The cost to run it? Around $0.20 per run.

That number should change how you think about your AI strategy.

Not because Gemma 4 is the best model ever built. It isn’t. But because it signals something more important: the gap between “affordable AI” and “frontier AI” is closing fast, and it’s closing faster than most business leaders have planned for.

What just happened

Gemma 4 is an open-weight model. That means anyone can download it, host it, and run it without paying per-token API fees to a big provider. At its benchmark performance level, it competes directly with models that cost significantly more to access.

A few months ago, getting GPT-4-class results meant paying GPT-4 prices. That’s no longer true. You can now get results that match or beat many commercial models at a fraction of the cost, often on infrastructure you control.

This is not a one-time event. It’s a trend. Open models have been catching up to closed ones for over a year, and Gemma 4 is the latest data point suggesting that the catch-up is accelerating.

What this means if you’re evaluating AI vendors right now

If you’re currently shopping for AI tools or renegotiating contracts with AI vendors, this is useful leverage. Vendors who charge premium prices for access to a proprietary model are now competing against open alternatives that are nearly as good or, in some cases, better on the tasks that matter to your business.

Ask vendors directly: what am I paying for beyond the model itself? If the honest answer is “the model,” that’s a weak value proposition in 2026. The defensible value in AI products is now in the workflow design, the integrations, the fine-tuning on your data, and the reliability of the system. Not raw model quality.

That said, don’t rip out your current stack just to chase a cheaper model. Switching costs are real. The question isn’t “is there a cheaper model?” The question is “what would a cheaper model actually save me, and what would it cost to switch?”

Do that math before you move.
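One rough way to frame that math is a break-even calculation: how many months of savings does it take to pay back the one-time cost of switching? The sketch below is illustrative only. The function name and every dollar figure are hypothetical, and it assumes monthly costs stay flat, which they rarely do in practice.

```python
import math

def breakeven_months(current_monthly_cost, new_monthly_cost, one_time_switch_cost):
    """Months until a switch pays for itself, or None if it never does.

    All inputs are in the same currency. one_time_switch_cost should include
    engineering time, re-testing, and retraining staff, not just infrastructure.
    """
    monthly_savings = current_monthly_cost - new_monthly_cost
    if monthly_savings <= 0:
        # The "cheaper" option is not actually cheaper once hosting is counted.
        return None
    return math.ceil(one_time_switch_cost / monthly_savings)

# Hypothetical figures, for illustration only.
months = breakeven_months(
    current_monthly_cost=8000,    # what you pay your current vendor
    new_monthly_cost=3000,        # hosting and upkeep for the alternative
    one_time_switch_cost=40000,   # migration effort
)
print(months)  # 8
```

If the break-even point lands beyond the period you can reasonably forecast, the cheaper model is probably not worth the move yet.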

The real shift: from model access to implementation quality

Here’s the thing that most businesses are missing about this moment.

When AI was expensive and access was limited, having a good AI tool was itself a competitive advantage. If you were using GPT-4 in 2023 and your competitor wasn’t, you had an edge just from the access.

That era is ending.

When frontier-level AI capability costs $0.20 a run, access is no longer the moat. Everyone will have access to good AI. The question becomes: what are you doing with it?

The businesses that win over the next two years won’t be the ones who picked the smartest model. They’ll be the ones who built the smartest system around whatever model they’re using.

That means having:

- Clean, structured data that the model can actually reason over

- Workflows where AI is embedded at the right decision points, not bolted on as an afterthought

- Human review loops calibrated to the actual risk level of each task

- Output validation so you know when the model is wrong before it costs you
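The last two items, review loops and output validation, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production design: the field names, the risk tiers, and the thresholds are all hypothetical, and it assumes the model returns JSON with a self-reported confidence score, which is itself an imperfect signal.

```python
import json

# Hypothetical schema: the fields a valid model output must contain.
REQUIRED_FIELDS = {"customer_id", "category", "confidence"}

# Hypothetical thresholds: the riskier the task, the more confidence is
# needed to skip human review. A value above 1.0 means "always review".
MIN_CONFIDENCE = {"low": 0.60, "medium": 0.85, "high": 1.01}

def route_output(raw_output: str, task_risk: str) -> str:
    """Return 'reject', 'human_review', or 'auto_approve' for one model output."""
    # Validation: malformed or incomplete output never reaches a decision.
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return "reject"
    if not isinstance(data, dict) or not REQUIRED_FIELDS.issubset(data):
        return "reject"
    confidence = data["confidence"]
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return "reject"
    if not 0 <= confidence <= 1:
        return "reject"
    # Review loop: calibrated to the risk tier of the task.
    if confidence >= MIN_CONFIDENCE[task_risk]:
        return "auto_approve"
    return "human_review"

sample = '{"customer_id": 17, "category": "billing", "confidence": 0.9}'
print(route_output(sample, "medium"))  # auto_approve
print(route_output(sample, "high"))    # human_review
```

The point of the design is that the same output gets different treatment depending on what is at stake, and that anything malformed is caught before it reaches a customer or a decision.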

This is not glamorous work. It’s operational, detail-oriented, and specific to how your business actually runs. But it’s the work that compounds.

Three things you should do now

Audit where AI is actually touching your workflows. Not where you’ve bought licenses. Not where someone did a demo. Where is AI generating outputs that affect real decisions, customer interactions, or operations? Most businesses have less coverage than they think, and the coverage they do have is often shallow.

Identify your highest-leverage gaps. There are almost certainly places in your business where a well-implemented AI workflow would save significant time or reduce errors. Common candidates include customer inquiry handling, internal knowledge retrieval, content review, and data extraction from unstructured sources. Pick the one that would have the biggest operational impact and treat it like a real project, not an experiment.

Stop optimizing for model selection. If you have someone on your team spending significant time evaluating which LLM to use, that’s probably misdirected energy right now. Model quality at a usable threshold is abundant and getting cheaper. Spend that time on the quality of your prompts, the structure of your data, and the design of the workflow instead.

The vendors you should be skeptical of

Any AI vendor whose pitch is primarily “we use the best model” is selling you something that’s depreciating fast.

Any vendor who can’t clearly explain what happens when the model is wrong, and how their system catches and corrects it, is selling you a demo, not a production system.

Any vendor who can’t show you actual workflow integration, not just a chatbot interface bolted onto your website, is not solving your actual problem.

The AI market is noisy right now because capability costs are dropping and everyone is scrambling to stay relevant. That’s creating a lot of product repositioning and rebranding. Look past the messaging and ask what the system actually does when something goes wrong.

What commodity AI means for your budget

Good news first: if you’re spending significant money on AI APIs right now, that number should come down over the next 12 to 18 months as open models continue to improve and competition between providers intensifies.

The money that used to go toward “access to smart AI” can now go toward “building smart systems around AI.” That’s a better investment. API costs are recurring and scale with usage. A well-built workflow is an asset that compounds.

The harder news: building those systems still requires real expertise. Knowing how to wire AI into an actual business process, handle edge cases, validate outputs, and iterate based on results is not something that commoditizes the same way model weights do. That skill gap is where the variance in AI ROI comes from.

Companies that invest in that expertise now, either building it internally or working with people who have it, will have a meaningful advantage as the models themselves become interchangeable.

The bottom line

Gemma 4 at $0.20 a run is a signal, not just a product launch. It tells you that the AI landscape is shifting from “who has access to good AI” to “who knows how to use it well.”

If your AI strategy is primarily about which models you’ve licensed, you’re optimizing for something that’s becoming a commodity. If your strategy is about how deeply and how well AI is embedded in your actual operations, you’re building something durable.

The businesses that figure out the implementation side of this will have compounding advantages that are much harder to copy than a model subscription.

If you’re not sure where your AI implementation actually stands, Le Ventures offers a free audit to help you see where the gaps are and what’s worth fixing first. No pitch, just an honest look at what you have and what you’re missing.