Your AI Agent Is Stuck Waiting for You

You built the agent. You connected it to your CRM, your email, your support queue. It can read, reason, and respond. Then you set it up to flag every decision for human review before taking action.

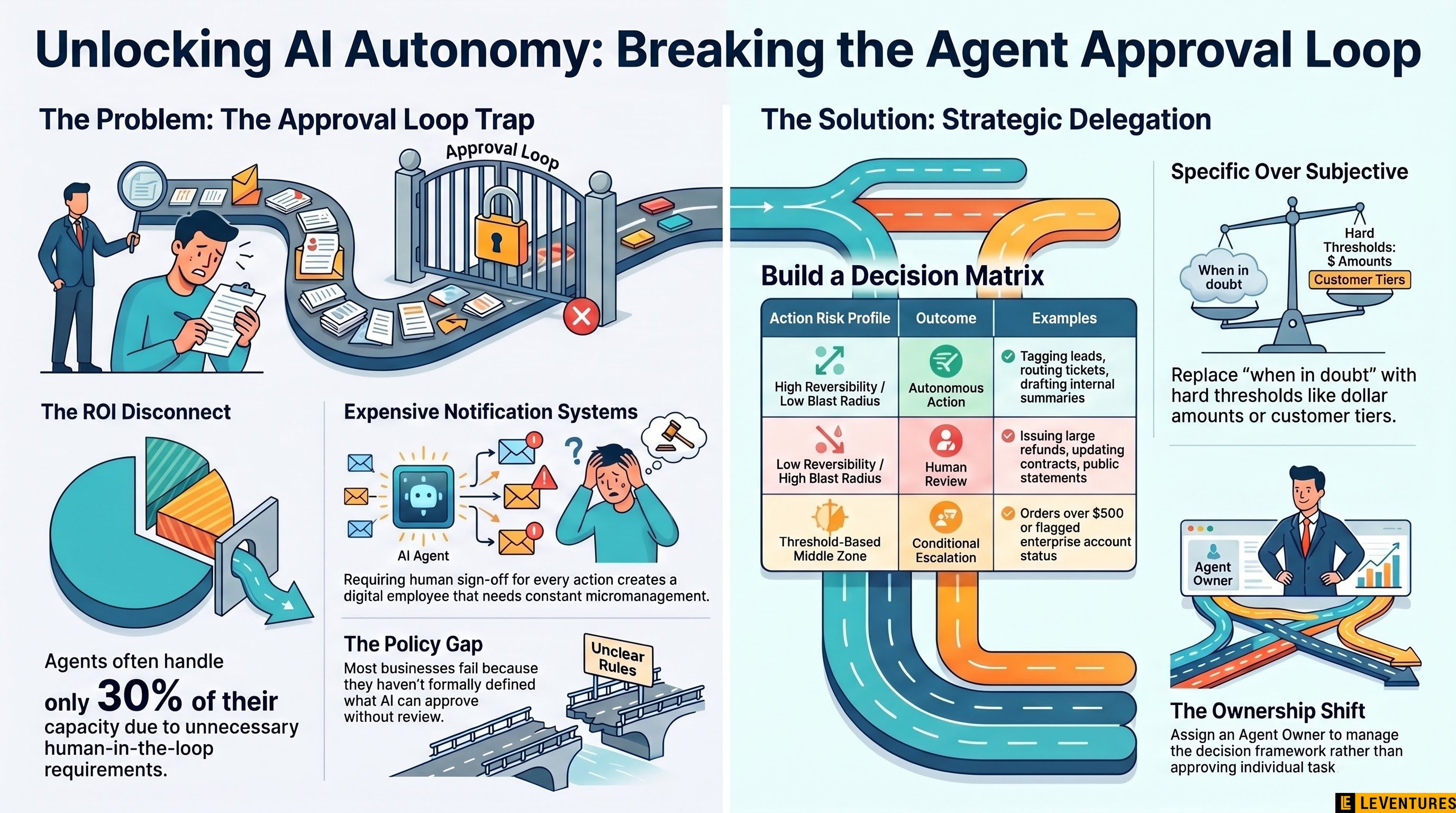

Congratulations. You just built a very expensive notification system.

The approval loop problem

This is where most AI agent deployments stall. The technology works. The agent can identify the right action 90% of the time. But the org hasn’t decided what the agent is actually allowed to do on its own, so it asks. You confirm. It acts. Repeat a hundred times a day.

That’s not automation. That’s a new employee who needs a manager’s sign-off to send an email.

The frustrating part is that this isn’t an AI problem. It’s a delegation problem, and most businesses have never had to solve it explicitly before. When you hire a human, they come with judgment, context, and professional norms. You don’t write down every decision they’re allowed to make. You trust that they’ll figure out the edges.

AI agents don’t get that benefit of the doubt. And if you don’t define their authority clearly, the safe default is to ask.

Why operators skip this step

Structuring decision authority sounds simple until you try to do it. You have to answer questions your org has never formally addressed:

- What dollar amount can this agent approve without review?

- Can it send external communications without a human seeing them first?

- What triggers escalation versus autonomous action?

- Who owns the decision when the agent gets it wrong?

Most teams skip this because it requires getting legal, ops, and leadership in a room to make actual policy decisions. That’s harder than setting up the API connection. So they deploy the agent with a conservative approval loop as a placeholder, and the placeholder never gets revisited.

Six months later, the agent is handling 30% of what it could theoretically handle, and the team wonders why ROI is disappointing.

How to structure it instead

Start with a decision matrix, not a permission list. For every action your agent might take, map two things: the reversibility of the action and the blast radius if it goes wrong.

Low reversibility plus high blast radius: the agent asks every time. Think sending a public statement, processing a refund over a threshold, or updating a customer’s contract terms.

High reversibility plus low blast radius: the agent acts autonomously. Think tagging a lead, drafting an internal summary, routing a ticket to the right queue, or sending a templated follow-up.

The middle zone is where you build your actual policy. Define the conditions under which the agent escalates, and make them specific. “When in doubt” is not a condition. “When the order value exceeds $500 or the customer account is flagged as enterprise” is a condition.
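If your team wants the matrix written down somewhere the agent can actually enforce it, it can be as small as a few fields per action plus one explicit escalation rule. The sketch below is a minimal illustration under stated assumptions, not a prescribed implementation: the `Action` fields, the yes/no scoring of reversibility and blast radius, and the `$500`/enterprise rule (borrowed from the example above) are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ACT = "act autonomously"
    ESCALATE = "escalate to a human"


@dataclass
class Action:
    # Hypothetical description of one action the agent proposes to take.
    name: str
    reversible: bool          # can the action be undone cheaply?
    high_blast_radius: bool   # would a mistake reach customers, money, or contracts?
    order_value: float = 0.0  # dollar amount involved, if any
    enterprise_account: bool = False


# Example threshold taken from the text; an assumption, tune it to your own policy.
ORDER_VALUE_LIMIT = 500.0


def decide(action: Action) -> Decision:
    # Low reversibility plus high blast radius: always ask.
    if not action.reversible and action.high_blast_radius:
        return Decision.ESCALATE
    # High reversibility plus low blast radius: act without review.
    if action.reversible and not action.high_blast_radius:
        return Decision.ACT
    # Middle zone: escalate only on specific, written-down conditions,
    # never on a vague "when in doubt".
    if action.order_value > ORDER_VALUE_LIMIT or action.enterprise_account:
        return Decision.ESCALATE
    return Decision.ACT


if __name__ == "__main__":
    print(decide(Action("tag lead", reversible=True, high_blast_radius=False)).value)
    # act autonomously
    print(decide(Action("refund", reversible=False, high_blast_radius=False, order_value=750)).value)
    # escalate to a human
    print(decide(Action("refund", reversible=False, high_blast_radius=False, order_value=120)).value)
    # act autonomously
```

The design choice worth copying is the fall-through order: the two clear-cut quadrants are settled first, and everything left over must match a named condition before it reaches a human.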

Once you have the matrix, test it against two weeks of historical decisions. You may well find that your agent could have acted autonomously on 60-70% of what it’s currently escalating. That’s your immediate efficiency gain.
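The replay itself doesn’t need tooling: run each past escalation through the new rule and count how many it would have let through. A minimal sketch, assuming your review queue can be exported as records with an order value and an enterprise flag; the record shape, `autonomous_share`, and `sample_rule` are hypothetical names, and the toy log is illustrative, not a measured result.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class EscalatedDecision:
    # Hypothetical shape of one logged escalation from the review queue.
    action: str
    order_value: float
    enterprise_account: bool


def autonomous_share(
    history: Iterable[EscalatedDecision],
    needs_human: Callable[[EscalatedDecision], bool],
) -> float:
    """Fraction of past escalations the new policy would have let the agent handle alone."""
    records = list(history)
    if not records:
        return 0.0
    autonomous = sum(1 for r in records if not needs_human(r))
    return autonomous / len(records)


def sample_rule(r: EscalatedDecision) -> bool:
    # Example middle-zone condition from the text: escalate above $500 or for enterprise accounts.
    return r.order_value > 500 or r.enterprise_account


if __name__ == "__main__":
    # Toy data for illustration only; replace with your real two-week export.
    log = [
        EscalatedDecision("refund", 40.0, False),
        EscalatedDecision("refund", 900.0, False),
        EscalatedDecision("follow-up email", 0.0, True),
        EscalatedDecision("route ticket", 0.0, False),
    ]
    print(f"{autonomous_share(log, sample_rule):.0%}")  # 50%
```

Passing the rule in as a function keeps the replay separate from the policy, so you can try several candidate thresholds against the same history before committing to one.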

The ownership question nobody asks

There’s a harder conversation underneath all of this: who is accountable when an autonomous agent makes a bad call?

Most orgs avoid this question by keeping humans in the loop for everything. That’s not accountability; it’s liability diffusion. It also means you never get the real benefit of autonomous operation.

The answer is to assign an agent owner, just like you’d assign a process owner. This person defines the decision authority, reviews error patterns, adjusts policy, and owns the outcomes. They’re not approving every decision. They’re responsible for whether the decision framework is right.

That accountability structure is what makes it safe to give the agent real authority. Without it, everyone’s too nervous to let go.

The actual implementation work

If you’re evaluating AI agent platforms right now, you’re probably comparing features, latency, accuracy rates, and integration options. Those matter. But the harder variable is how clearly your org can define what the agent is authorized to do.

The teams getting real throughput from agents in 2026 aren’t the ones with the best models. They’re the ones who did the decision design work that most operators skip.

If you want a second set of eyes on where your agent setup is bottlenecked, Le Ventures offers a free audit that often surfaces these gaps in the first conversation.