Stop Building AI Nobody Asked For

The Graveyard Is Already Full

Retailers are spending real money on AI right now. Chatbots, product recommendation engines, virtual try-on tools, AI-powered search, personalized landing pages. The press releases are flowing.

The customers are not.

Modern Retail has been tracking this closely, and the pattern is clear: most retail AI projects get built, launched, quietly ignored, and eventually shelved. The investment gets written off. The team moves on to the next thing.

This is not a technology problem. The technology works. The problem is how businesses are deciding what to build.

The Wrong Question Is Costing You Six Months

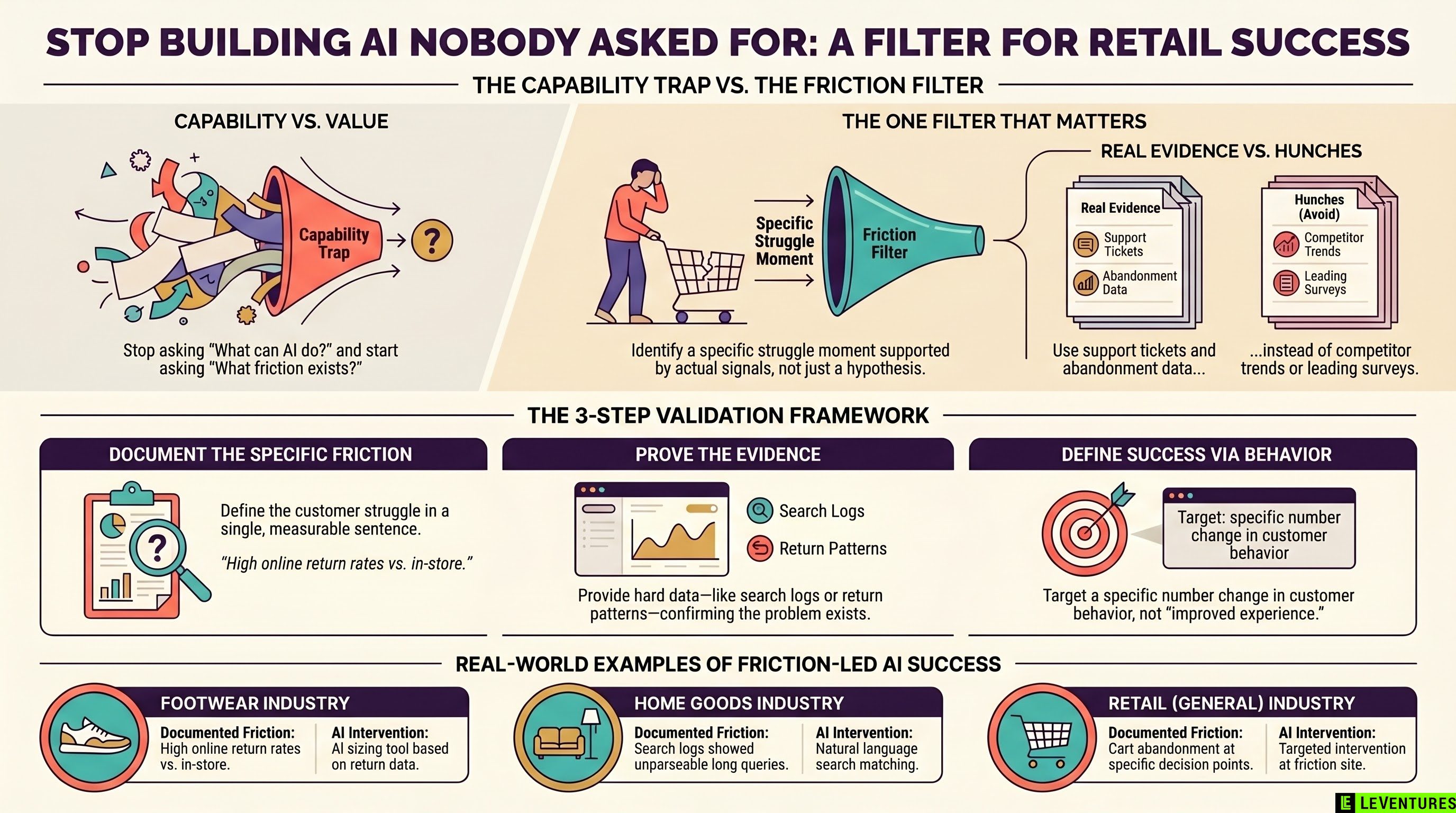

When most companies kick off an AI initiative, the first question in the room is some version of: “What can AI do for us?”

That question sounds reasonable. It is actually a trap.

It focuses your team on capability (what is technically possible) instead of value (what would actually change customer behavior). So you end up building things like an AI stylist chatbot because AI can do conversational styling recommendations, not because your customers told you they were stuck on outfit decisions.

The result is a product your engineering team is proud of and your customers never open.

The retailers getting traction are asking a different question first.

The One Filter That Changes Everything

Before any AI project gets approved, ask this:

What specific friction does my customer hit today, and how do I know it exists?

That is it. One question. But it has teeth.

Notice what it requires. You need to name a specific moment where customers struggle, not a general category like “personalization” or “discovery.” And you need evidence - not a hypothesis, not a competitor’s case study, not something your product manager read in a newsletter. Actual signals from your actual customers.

If your team cannot answer that question with specifics, the project should not get funded. Not yet.

This sounds harsh. It is meant to be. The alternative is a six-month build cycle, a launch, three months of low-engagement data, and a post-mortem where everyone agrees the timing was off and the market wasn't ready. The market was ready. The product just didn't solve anything.

What Evidence Actually Looks Like

Here is what counts as evidence that a friction point exists:

- Support ticket volume around a specific problem (“customers keep asking which size to order”)

- Cart abandonment data tied to a specific page or decision point

- Search queries that return poor results repeatedly

- Return rate patterns linked to a specific product category

- NPS comments that cluster around the same complaint

Here is what does not count:

- “We think customers want more personalization”

- “Competitors are doing this”

- “AI can do X, and X seems useful”

- A survey where you asked leading questions

The difference matters because evidence points you toward a specific intervention. A hunch points you toward a demo.
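To make one of these signals concrete, here is a minimal sketch of how a team might surface search queries that repeatedly return poor results. It assumes a hypothetical export of search log rows, each with the query text, the number of results returned, and whether the shopper clicked anything. The field names and both thresholds are illustrative assumptions, not a standard; adjust them to whatever your search tool actually records.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class SearchEvent:
    # Hypothetical log schema: adjust to whatever your search tool exports.
    query: str
    result_count: int
    clicked: bool


def failing_queries(events, min_searches=3, max_success_rate=0.2):
    """Return (query, searches, success_rate) for queries searched at least
    `min_searches` times whose share of successful searches (results returned
    AND a click) is at or below `max_success_rate`, most-searched first."""
    totals = defaultdict(int)
    successes = defaultdict(int)
    for e in events:
        key = " ".join(e.query.lower().split())  # normalize case and whitespace
        totals[key] += 1
        if e.result_count > 0 and e.clicked:
            successes[key] += 1
    flagged = [
        (q, n, successes[q] / n)
        for q, n in totals.items()
        if n >= min_searches and successes[q] / n <= max_success_rate
    ]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Made-up log rows for illustration only.
    log = [
        SearchEvent("light wood coffee table that fits under a low sectional", 0, False),
        SearchEvent("Light wood coffee table that fits under a low sectional", 2, False),
        SearchEvent("light wood coffee table that fits under a low  sectional", 0, False),
        SearchEvent("coffee table", 48, True),
        SearchEvent("coffee table", 48, True),
        SearchEvent("coffee table", 48, False),
    ]
    for query, count, rate in failing_queries(log):
        print(f"{count} searches, {rate:.0%} success: {query}")
```

The point is not the script itself. A list of repeatedly failing queries is a documented friction point; "customers want better search" is a hunch.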

What the Retailers Getting Traction Have in Common

A few retailers are actually seeing AI move the needle. They are not necessarily the biggest players with the biggest budgets. They share a different characteristic: they started with a documented problem.

One footwear brand noticed that their return rate on online orders was significantly higher than in-store. The friction was clear - customers couldn’t tell if something would fit from photos alone. They built an AI sizing recommendation tool trained on their specific return data and product dimensions. Returns dropped. That’s a measurable outcome tied to a documented problem.

A home goods retailer saw in their search logs that customers were typing long, descriptive queries that their existing search couldn’t parse - things like “light wood coffee table that fits under a low sectional.” They used AI to improve search result matching for natural language queries. Conversion on search improved. Again, documented problem, specific intervention, measurable result.

Neither of these projects started with “what can AI do?” They started with “why are customers leaving without buying, and where exactly does that happen?”

The Org Problem Behind the Wrong Question

Here is something worth naming directly: the “what can AI do?” question is not just a strategic error. It is often a political one.

AI budgets are large right now. Executives want to show AI progress. That pressure flows down and produces a race to build things that look impressive in a board presentation - a chatbot with a name, a flashy personalization feature, a virtual try-on tool with a polished UI.

The problem is that board presentations reward demos. Customers reward solutions to actual problems.

If your AI roadmap is being driven more by what will look good in a quarterly review than by what will reduce a specific friction your customers have, you are building for the wrong audience.

This is a conversation worth having explicitly in your planning cycle. Before Q2 or Q3 budgets get locked, someone needs to ask: for each of these projects, what is the customer problem we have documented evidence of, and what does success look like in terms of customer behavior change?

If that question makes people uncomfortable, that is useful information.

A Practical Process for Q2 and Q3 Planning

If you are heading into a planning cycle and want to apply this filter, here is a simple process:

List every proposed AI project. For each one, require the proposing team to document three things in writing before the project gets a budget line:

- The specific friction point - one sentence, specific enough to be measurable

- The evidence that friction exists - the actual data, not the hypothesis

- The behavioral outcome that would indicate success - not “improved experience” but a number that moves

If all three can be answered clearly, the project is worth evaluating. If any of them produces vague answers, send it back. The team needs to do customer and data work before they come back with a proposal.

This process does two things. It stops bad projects before they start. And it makes the good projects stronger, because the team doing the build has a clear definition of success from day one.
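If your intake happens in a form or spreadsheet, the three-part requirement can also be checked mechanically before a human ever reviews it. Below is a minimal sketch. The `Proposal` fields, the list of vague phrases, and the rule that a success metric needs both a baseline and a target number are all assumptions for illustration. A check like this only catches missing or obviously vague answers; it cannot judge whether the evidence is any good.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical phrases that signal a hunch rather than a documented problem.
VAGUE_PHRASES = ("improved experience", "more personalization", "competitors are doing")


@dataclass
class Proposal:
    name: str
    friction: str  # one sentence naming the specific friction point
    evidence: str  # where the supporting data lives, e.g. a named report
    metric: str  # the customer behavior that should move
    baseline: Optional[float] = None  # current value of the metric
    target: Optional[float] = None  # value that would count as success


def review(p: Proposal) -> List[str]:
    """Return reasons to send the proposal back; an empty list means it can be evaluated."""
    problems = []
    for field_name in ("friction", "evidence", "metric"):
        text = getattr(p, field_name).strip().lower()
        if not text:
            problems.append(f"{field_name}: missing")
        elif any(phrase in text for phrase in VAGUE_PHRASES):
            problems.append(f"{field_name}: too vague")
    if p.baseline is None or p.target is None:
        problems.append("metric: needs a baseline and a target number")
    elif p.baseline == p.target:
        problems.append("metric: target must differ from baseline")
    return problems


if __name__ == "__main__":
    chatbot = Proposal(
        name="AI stylist chatbot",
        friction="Customers want more personalization",
        evidence="",
        metric="Improved experience",
    )
    # Hypothetical numbers for illustration only.
    size_step = Proposal(
        name="Size guidance at checkout",
        friction="Shoppers abandon the cart at the size selection step",
        evidence="Checkout funnel report, step-level drop-off",
        metric="Cart abandonment rate at the size step",
        baseline=0.40,
        target=0.32,
    )
    for proposal in (chatbot, size_step):
        print(proposal.name, review(proposal) or "ready to evaluate")
```

The chatbot proposal comes back with four reasons to send it back; the second is ready for a real evaluation. Keeping the gate this blunt is deliberate: it forces the conversation about evidence to happen before the budget line exists.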

The AI Wave Is Not Slowing Down

The pressure to ship AI is going to increase through the rest of this year, not decrease. That means the graveyard of abandoned projects is going to get bigger before it gets smaller.

The businesses that come out ahead will not be the ones who built the most. They will be the ones who built the right things for documented reasons and could measure whether those things worked.

One question before every project. That is the whole filter.

At Le Ventures, we work with operations and marketing leaders to figure out which AI projects are worth building and which ones will waste your next two quarters. If you want a clear-eyed look at your current AI roadmap before budgets lock, reach out for a free audit. We will tell you what we actually think.