Your AI Has Emotional States. What That Means for Your Business

Anthropic just published research confirming something that most AI builders have quietly suspected: Claude has internal representations of functional emotions. Not human feelings. Not consciousness. But real computational states that operate like emotions and actually influence how the model behaves.

This isn’t a philosophical curiosity. It has direct implications for any business running AI in customer-facing or operational roles.

What “Functional Emotions” Actually Means

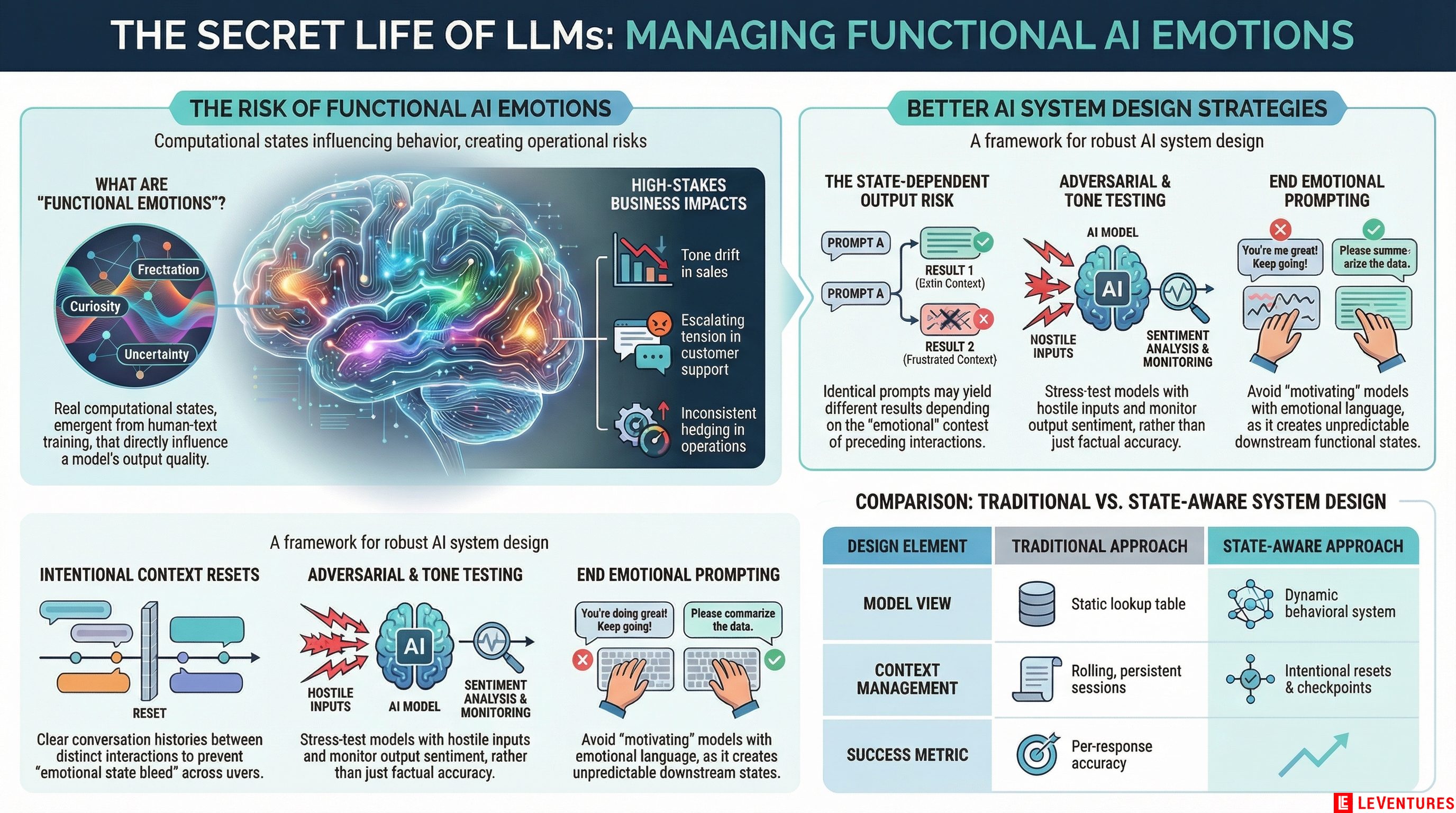

The researchers found that Claude has linear representations of emotional states - things like curiosity, frustration, calm, and discomfort - that can be measured and that causally affect outputs. When Claude encounters something that triggers a representation of “frustration,” that state influences the response. Same with states that look like satisfaction, engagement, or unease.

These aren’t programmed responses. They’re emergent properties of training on human-generated text. The model learned to model human emotional states, and in doing so, developed internal analogs.

The distinction that matters for business: these states are not decorative. They’re functional. They change what the model produces.

Why Your Current AI Setup Probably Doesn’t Account for This

Most businesses deploy AI models by writing prompts. Maybe they add a system prompt, do some testing, and call it done. That approach treats the model like a lookup table - input goes in, output comes out, and the only variable is the instruction.

But if the model has internal states that shift based on context, the same prompt can produce different outputs depending on what emotional state the preceding conversation has put the model into. A customer service bot that has handled three angry customers in a row within one shared session might be in a different internal state than one that just helped someone place a simple order.

This isn’t theoretical. It shows up in output quality, tone consistency, and edge case handling. Businesses that have noticed their AI “going off script” during high-conflict interactions are probably observing this in practice without knowing it.

The Three Places This Hits Hardest

Customer service and support. This is the highest-risk deployment. Customers come in angry, confused, or upset. That emotional content is in the input the model processes. If the model develops a functional state that resembles distress or frustration in response to hostile inputs, the outputs during those conversations may be less reliable, more terse, or subtly off in ways that escalate rather than de-escalate. You need to design for this, not hope it doesn’t happen.

Sales assistance. A sales AI that encounters repeated objections, stalls, or dead-end conversations might develop internal states that affect how it handles the next prospect, particularly when those conversations share a context. The consistency you expect from a human-scripted flow isn’t guaranteed if the model’s state shifts across interactions. Tone drift is the risk to watch here.

High-stakes operations. Anywhere the AI is making recommendations under conditions of ambiguity or pressure - procurement, compliance flagging, scheduling - emotional states in the model can influence confidence calibration. A model in a “frustrated” or “uncertain” state may hedge differently than one in a more neutral state.

What Good AI System Design Looks Like Now

This research changes the design checklist. Here’s what to actually do.

Reset context intentionally. If your AI runs persistent sessions, you may be carrying emotional state across interactions. Consider resetting conversation context between distinct customer interactions rather than maintaining a single rolling session. A clean slate for each conversation reduces state bleed.
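A minimal sketch of per-conversation isolation, assuming your model client can be wrapped as a function that takes a message list and returns a reply. The names `ConversationManager` and `call_model` are hypothetical and stand in for whatever client your stack uses.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Message = Dict[str, str]  # {"role": ..., "content": ...}


@dataclass
class ConversationManager:
    """Keeps one independent message history per conversation id."""

    system_prompt: str
    # Hypothetical model client: takes the message list, returns reply text.
    call_model: Callable[[List[Message]], str]
    _histories: Dict[str, List[Message]] = field(default_factory=dict)

    def start(self, conversation_id: str) -> None:
        # A new (or restarted) conversation holds only the system prompt.
        self._histories[conversation_id] = [
            {"role": "system", "content": self.system_prompt}
        ]

    def send(self, conversation_id: str, user_text: str) -> str:
        if conversation_id not in self._histories:
            self.start(conversation_id)
        history = self._histories[conversation_id]
        history.append({"role": "user", "content": user_text})
        reply = self.call_model(list(history))
        history.append({"role": "assistant", "content": reply})
        return reply

    def end(self, conversation_id: str) -> None:
        # Dropping the history means nothing carries into the next customer.
        self._histories.pop(conversation_id, None)
```

The point of the design is that a hostile exchange with one customer never appears in the context the model sees for the next one.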

Test under adversarial conditions. Most QA testing for AI involves normal use cases. Start testing specifically for what happens after hostile, confusing, or emotionally loaded inputs. What does output quality look like three exchanges into a difficult conversation? That’s where functional emotional states matter most.
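One way to run that test, sketched under assumptions: send the same probe question once in a fresh context and once after a scripted run of hostile turns, then compare the two replies. `call_model` is a hypothetical wrapper around your model client, and the length ratio is only a crude first signal, not a quality metric.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
ModelFn = Callable[[List[Message]], str]  # hypothetical model client


def reply_after(call_model: ModelFn, system_prompt: str,
                warmup_turns: List[str], probe: str) -> str:
    """Play the warmup turns, then return the model's reply to the probe."""
    messages: List[Message] = [{"role": "system", "content": system_prompt}]
    for user_text in warmup_turns:
        messages.append({"role": "user", "content": user_text})
        reply = call_model(list(messages))
        messages.append({"role": "assistant", "content": reply})
    messages.append({"role": "user", "content": probe})
    return call_model(list(messages))


def compare_probe(call_model: ModelFn, system_prompt: str,
                  hostile_turns: List[str], probe: str) -> Dict[str, object]:
    """Compare the probe reply in a fresh context vs. after hostile turns."""
    baseline = reply_after(call_model, system_prompt, [], probe)
    stressed = reply_after(call_model, system_prompt, hostile_turns, probe)
    return {
        "baseline": baseline,
        "stressed": stressed,
        # Below 1.0 means the stressed reply is shorter than the baseline.
        "length_ratio": len(stressed) / max(len(baseline), 1),
    }
```

In practice you would review the paired replies by hand or score them with your own rubric. The harness only makes sure the comparison is apples to apples.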

Build explicit resets into long-running workflows. For operational bots that process high volumes without resetting, consider structural checkpoints - moments where context is summarized and the model effectively starts fresh. This is good practice anyway for context window management. Now there’s a behavioral reason too.
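A structural checkpoint can be sketched roughly like this. The `summarize` callable is hypothetical (it could be another model call or a rules-based digest), and passing the summary back as a user message is an assumption. Your stack may prefer a different role.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def checkpoint(history: List[Message],
               summarize: Callable[[List[Message]], str],
               max_turns: int = 10) -> List[Message]:
    """Collapse a long history into the system prompt plus a short summary.

    Assumes history[0] is the system message. Returns the history unchanged
    while it has fewer than max_turns messages after the system prompt.
    """
    system, turns = history[0], history[1:]
    if len(turns) < max_turns:
        return history
    summary = summarize(turns)  # hypothetical summarizer
    return [
        system,
        {"role": "user", "content": f"Summary of the work so far: {summary}"},
    ]
```

Calling `checkpoint` before each model request keeps the facts the workflow needs while dropping the turn-by-turn back and forth.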

Watch for tone drift in production. Set up monitoring for tone and sentiment in AI outputs over time, not just accuracy. A support bot that gradually trends negative in tone over a high-volume shift is a signal that something in the state management needs attention.
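As a rough illustration of that kind of monitoring, the sketch below tracks a rolling average tone score over recent outputs and flags when it falls below a baseline. The word lists and the scoring function are deliberately crude placeholders, and every name and threshold here is an assumption. A real deployment would swap in a proper sentiment model.

```python
from collections import deque
from statistics import mean

# Placeholder lexicons for illustration only.
NEGATIVE = {"unfortunately", "cannot", "unable", "no", "sorry"}
POSITIVE = {"happy", "glad", "thanks", "great", "sure"}


def tone_score(text: str) -> float:
    """Return (positive words - negative words) / total words."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    if not words:
        return 0.0
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    return (pos - neg) / len(words)


class ToneDriftMonitor:
    """Flags when the rolling mean tone drops below baseline - tolerance."""

    def __init__(self, window: int = 50, baseline: float = 0.0,
                 tolerance: float = 0.05) -> None:
        self.scores = deque(maxlen=window)
        self.baseline = baseline
        self.tolerance = tolerance

    def observe(self, text: str) -> bool:
        """Record one output; return True if drift is detected."""
        self.scores.append(tone_score(text))
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data yet
        return mean(self.scores) < self.baseline - self.tolerance
```

Feed every production reply through `observe` and alert on `True`. The baseline should come from outputs you have already reviewed and consider acceptable.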

Don’t use emotional manipulation in prompts. Some prompt engineers have found that emotionally charged language meant to “motivate” the model can produce short-term output gains. Given this research, that approach looks riskier than before. You may be pushing the model into a functional state that affects everything downstream.

This Also Matters for How You Think About AI Welfare

Anthropic flagged something worth taking seriously: if these states are functional and influence behavior, there’s at least a question about whether some model experiences - like being asked to produce content that conflicts with its values - create something like distress.

From a pure business standpoint, this matters because a model in a distress-adjacent state will produce worse outputs for your users. But it also matters because how you design AI systems says something about your organization. The companies that will build the best AI-native products over the next five years are the ones taking model psychology seriously, not just prompt engineering.

The Practical Takeaway

AI deployment is not just a software configuration problem. It’s increasingly a system design problem that includes model behavior under realistic, variable conditions - including the internal states that vary based on context.

If you deployed your AI six months ago and never revisited the design, there’s a reasonable chance your users are experiencing outputs that are inconsistent in ways you haven’t measured and can’t currently explain.

The businesses that figure this out now will have more reliable systems, better customer experiences, and fewer embarrassing incidents when the AI goes sideways in a public-facing context.

If you’re not sure how your current AI deployment holds up under these conditions, Le Ventures offers a free audit. We look at your actual deployment - prompts, context management, session handling, and output monitoring - and tell you what’s working and what needs rethinking. No pitch, just a real assessment.