Your AI Coding Assistant Is Holding You Back

The Permission Trap Nobody Talks About

Agentic AI is not coming. It is already here, already running in production, already generating real revenue for the companies that figured out how to use it.

Cloudflare has launched AI Gateway along with tools for building and orchestrating agents. Salesforce is running agents that close support tickets without a human in the loop. Startups are deploying AI that handles entire workflows, not just single prompts. The infrastructure layer for autonomous AI is being built right now, and it is being built fast.

And yet, if you open Claude Code, Codex, or Gemini CLI and try to build something, you spend half your time clicking “approve” on every little action.

That tension is worth talking about.

Agentic AI at the Infrastructure Level vs. What You Actually Get

At the infrastructure level, the vision is clear. An agent receives a goal, breaks it into tasks, executes them, handles errors, loops back, and delivers a result. No human babysitting required. That is the whole point.

Cloudflare’s Workers AI, AWS Bedrock Agents, Google’s Vertex AI pipelines - these platforms are being designed with the assumption that AI will run long tasks autonomously. Durable execution, state management across steps, error recovery. The plumbing is getting built.

Then you go back to your coding assistant and it asks permission to create a file.

There is a real gap between what the infrastructure is being built to support and what the actual development experience looks like today for most people building with AI.

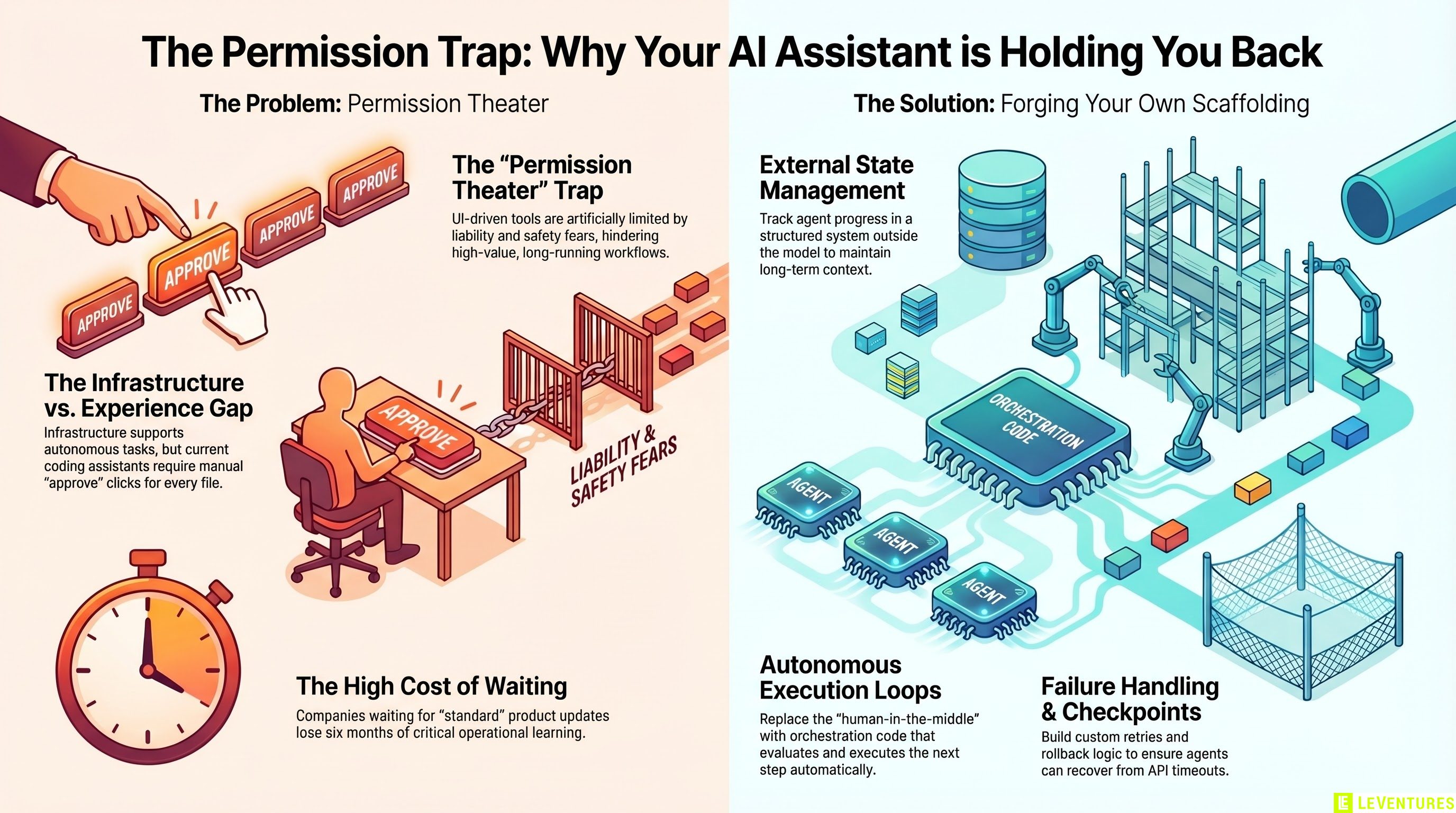

The Permission Theater Problem

Claude Code has a “bypass permissions” mode. In theory, it lets the agent run without stopping to confirm each action. In practice, it skips some confirmations and still stops for others. The behavior is inconsistent, and the result is not truly autonomous.

Codex and Gemini have similar patterns. They will run several steps, then pause and ask you to confirm something, then run a few more, then pause again. You end up sitting there watching a progress bar and clicking “yes” over and over.

This is not a knock on the tools. There are real reasons for it: liability, safety, user trust. But let's call it what it is: permission theater. The tools are technically capable of more autonomy than they are allowed to demonstrate.

What ends up happening is that people use these tools for small tasks where the interruptions do not matter much. The big, long-running workflows that would generate the most value? Those stay in the “someday” pile.

The Experimentation Gap

Here is the part that should bother you if you are trying to stay ahead.

Long-running agents already exist in the labs. Anthropic, OpenAI, and Google all have internal demos and research showing agents that run for hours, manage complex multi-step workflows, and complete substantial tasks without human intervention. Some of this work has been written up in research papers or presented at conferences.

But as a developer or business owner trying to build with these tools today, you cannot just flip a switch and access that functionality. You get the consumer version: the cautious one, where the company has decided the general public is not ready to have AI running loose in their systems.

That is a reasonable call from a risk management perspective. It is a frustrating call from the perspective of someone trying to build something real.

By the time these features become standard and accessible to everyone, the companies that figured out how to build the underlying scaffolding themselves will already have six months of operational data, refined processes, and working systems. The others will be starting from scratch with a shinier UI.

What Building Your Own Scaffolding Actually Looks Like

If you want to work with long-running AI agents before the tools hand them to you, you have to build the scaffolding yourself. That means a few things.

First, you need state management outside the model. Each API call is stateless: the model does not remember what it did three steps ago unless you include that in the context you send. Build a system that tracks state, logs each step, and passes context forward. This can be as simple as a structured JSON file or as complex as a database, depending on your use case.
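To make the "structured JSON file" option concrete, here is a minimal sketch in Python. The `RunState` schema and the helpers `load_state`, `save_state`, `record_step`, and `build_context` are hypothetical names chosen for illustration, not part of any library; a production system might swap the file for SQLite or a real database.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass, field


@dataclass
class RunState:
    # Hypothetical schema: the goal plus an append-only log of completed steps.
    goal: str
    steps: list = field(default_factory=list)


def load_state(path: str, goal: str) -> RunState:
    """Resume from an existing state file, or start a fresh run."""
    if os.path.exists(path):
        with open(path, "r", encoding="utf-8") as f:
            data = json.load(f)
        return RunState(goal=data["goal"], steps=data["steps"])
    return RunState(goal=goal)


def save_state(path: str, state: RunState) -> None:
    """Write atomically: dump to a temp file, then rename it over the old one."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as f:
        json.dump(asdict(state), f, indent=2)
    os.replace(tmp, path)  # a crash mid-write never leaves a half-written file


def record_step(state: RunState, name: str, output: str) -> None:
    """Log one completed step so later steps (and restarts) can see it."""
    state.steps.append({"index": len(state.steps), "name": name, "output": output})


def build_context(state: RunState, last_n: int = 3) -> str:
    """Pass context forward: the goal plus a summary of the most recent steps."""
    lines = [f"Goal: {state.goal}"]
    for step in state.steps[-last_n:]:
        lines.append(f"Step {step['index']} ({step['name']}): {step['output']}")
    return "\n".join(lines)
```

After each step you call `record_step` and `save_state`; if the process dies, `load_state` on the same path picks up where it stopped, and the string from `build_context` goes into the next prompt.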

Second, you need an execution loop that does not depend on a human in the middle. That means owning the orchestration layer: something that takes a task, runs a prompt, evaluates the output, decides the next step, and continues without waiting for approval. Frameworks like LangChain or CrewAI can provide this loop, or you can write your own directly on top of the model APIs.
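Here is a minimal sketch of that loop written directly in Python rather than with a framework. `call_model` is a hypothetical stand-in for whatever model client you use, and the `tool_name: argument` / `DONE: result` reply convention is an assumption for illustration, not any vendor's format.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical interfaces: swap in a real model client and real tools.
ModelFn = Callable[[str], str]  # takes a prompt, returns the model's reply
Tool = Callable[[str], str]     # takes an argument string, returns a result


def run_agent(goal: str, call_model: ModelFn, tools: Dict[str, Tool],
              max_steps: int = 10) -> Tuple[str, List[str]]:
    """Prompt, act, evaluate, repeat, with no approval step in between."""
    history: List[str] = []
    for _ in range(max_steps):
        recent = "\n".join(history[-5:]) or "(none)"
        prompt = (
            f"Goal: {goal}\n"
            f"Steps so far:\n{recent}\n"
            f"Tools: {', '.join(sorted(tools))}\n"
            "Reply 'tool_name: argument' for the next action, "
            "or 'DONE: result' when the goal is met."
        )
        reply = call_model(prompt).strip()
        # Evaluate the output: is the model signalling completion?
        if reply.startswith("DONE:"):
            return reply[len("DONE:"):].strip(), history
        # Otherwise parse and execute the requested action.
        name, _, arg = reply.partition(":")
        tool = tools.get(name.strip())
        result = tool(arg.strip()) if tool else f"error: unknown tool {name.strip()!r}"
        # Feed the result back so the model can decide the next step.
        history.append(f"{reply} -> {result}")
    raise RuntimeError(f"goal not reached within {max_steps} steps")
```

For testing, `call_model` can be a scripted fake that returns canned replies. Keep the `max_steps` cap in any real version: a loop with no human in it also has no human to notice it spinning.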

Third, you need failure handling. Long-running tasks fail. The API times out, the model produces something unexpected, a downstream tool returns an error. If your loop does not handle that gracefully, you end up with half-finished work and no way to recover. Build retries, checkpoints, and rollback logic from the start.
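Here is one way retries, checkpoints, and rollback can fit together, sketched in Python. Everything here is hypothetical: `with_retries`, `run_with_rollback`, and the `(name, do, undo)` step shape are illustrative names, and the in-memory `completed` list stands in for checkpoints you would persist to disk, for example in your state file.

```python
import time
from typing import Callable, List, Tuple, TypeVar

T = TypeVar("T")

# Hypothetical step shape: (name, do, undo), where undo reverses do's side effects.
Step = Tuple[str, Callable[[], object], Callable[[], object]]


def with_retries(fn: Callable[[], T], attempts: int = 3, base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry a flaky call with exponential backoff (1s, 2s, 4s, ... by default)."""
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    for attempt in range(attempts - 1):
        try:
            return fn()
        except Exception:
            sleep(base_delay * 2 ** attempt)
    return fn()  # final attempt: let any exception propagate


def run_with_rollback(steps: List[Step], completed: List[str],
                      sleep: Callable[[float], None] = time.sleep) -> None:
    """Run steps in order, skip checkpointed ones, and undo this run's work on failure."""
    undo_stack: List[Tuple[str, Callable[[], object]]] = []
    try:
        for name, do, undo in steps:
            if name in completed:  # checkpoint: finished on an earlier run, skip it
                continue
            with_retries(do, sleep=sleep)
            completed.append(name)  # in practice, persist this checkpoint to disk
            undo_stack.append((name, undo))
    except Exception:
        # Roll back only the steps completed during this run, newest first.
        for name, undo in reversed(undo_stack):
            undo()
            completed.remove(name)
        raise
```

Catching every `Exception` keeps the sketch short. In a real loop you would retry only transient errors such as timeouts and fail fast on everything else, since retrying a bad prompt three times just produces the same bad output three times.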

This is not simple work. But it is the work that separates teams that are genuinely ahead from teams that are waiting for a product update to save them.

Why This Matters for Your Business

The companies getting the most value from AI right now are not the ones using the best off-the-shelf tools. They are the ones who built internal systems early, learned from them, and kept iterating.

The gatekeeping is real. There is a pipeline of features sitting between the research lab and your dashboard, and the incumbents - the enterprise software companies, the agencies with enterprise contracts, the well-funded startups - get early access that the rest of the market does not. By the time a feature is in the standard product, the advantage has already been captured by someone else.

The only way to stay ahead of that dynamic is to stop waiting for the feature to arrive and start building the capability yourself.

That does not mean you need to hire a team of ML engineers. It means you need to understand what the tools can actually do under the hood, build a thin layer of orchestration on top of them, and start running real workflows against real problems.

The learning you get from six months of running your own agentic workflows is worth more than any product update that eventually reaches your dashboard.

The Window Is Open, But Not Forever

Right now, there is still a meaningful gap between businesses that are building with agentic AI and businesses that are using AI as a chat tool. That gap creates real competitive advantage.

That window closes as the tools get easier and the features get democratized. When anyone can spin up a reliable long-running agent with three clicks, the advantage disappears.

The time to build the internal knowledge and working systems is now, while building it still requires effort that most competitors will not bother with.

If you want to figure out where agentic AI actually fits in your business and what it would take to build something that runs without you babysitting it, Le Ventures offers a free audit. We will look at your current workflows, identify where autonomous AI creates the most leverage, and tell you honestly what it would take to get there. No sales pitch, just a real assessment.