The AI Platform Shift: What Leaders Must Know

For the last two years, the default answer to “which AI should we use?” was OpenAI. Not because anyone did a rigorous evaluation. Because it was the obvious choice - the name everyone knew, the one your competitors were already using, the one your vendors had already integrated.

That default is quietly shifting.

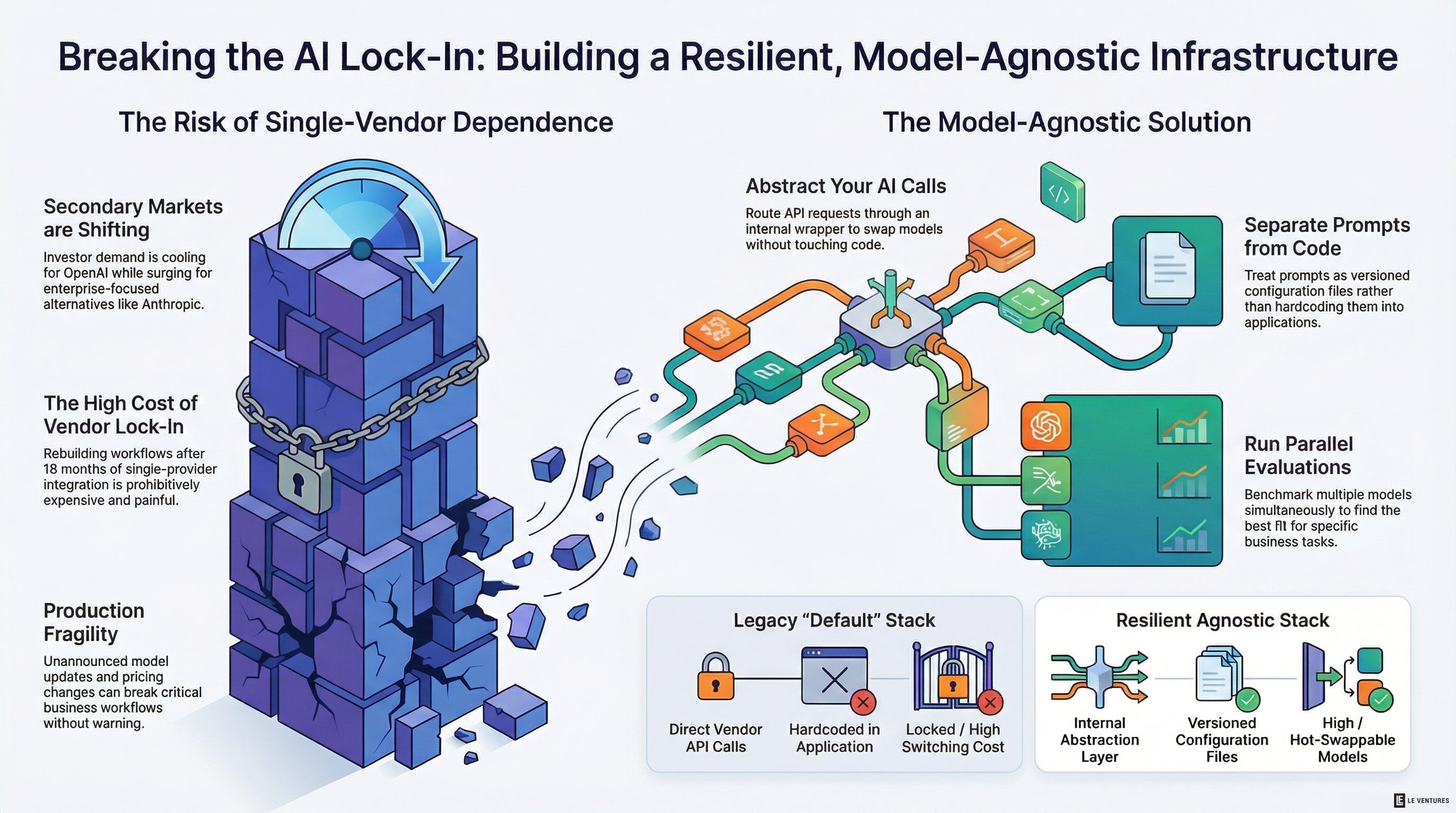

Secondary market data - where investors trade pre-IPO equity - is one of the clearest early signals of where enterprise confidence is moving. OpenAI demand has been cooling. Anthropic demand has been surging. This isn’t a prediction or an analyst opinion. It’s people with money on the line moving it around.

What does that mean for you, if you run a business that’s in the middle of building or scaling its AI infrastructure? It means the assumption that OpenAI is the safe, stable foundation for your AI stack deserves a hard second look.

Why Secondary Markets Matter Here

Secondary market signals aren’t about stock picking. They reflect forward-looking bets on who enterprise customers are going to trust, partner with, and spend money on over the next few years.

When enterprise investors shift their exposure from one AI vendor to another, they’re usually seeing early signs in procurement conversations, partnership pipelines, and customer feedback loops that haven’t surfaced publicly yet.

The Anthropic surge tracks with what we’re hearing from operations leaders: Claude is being evaluated more seriously for enterprise use cases, particularly ones involving long documents, compliance-sensitive workflows, and anything that needs reliable reasoning rather than flashy output.

This isn’t a “Claude is better than GPT-4” conversation. It’s a “the enterprise AI landscape is becoming genuinely competitive” conversation, and most businesses haven’t adjusted their strategy to account for that.

The Real Problem: You Probably Built on One Provider

Here’s the situation a lot of companies are in right now.

They picked a single AI vendor 12 to 18 months ago. They built workflows, automations, and internal tools on top of that provider’s API. They trained their team on that interface. They negotiated an enterprise contract. And now they’re locked in - not because the contract forces them to stay, but because rebuilding everything is painful and expensive.

That’s vendor lock-in, and it’s the actual risk here. The danger is not that any one provider will fail tomorrow, but that:

- Pricing structures change (OpenAI has already repriced multiple times)

- Model behavior changes with updates (and it has - multiple times, sometimes in ways that broke production workflows)

- A new model from a competitor is meaningfully better for your specific use case, but you can’t easily switch

- Your provider has an outage during a critical business moment

None of these are hypothetical. All of them have happened to real businesses in the last 18 months.

What a Model-Agnostic Stack Actually Looks Like

Being model-agnostic doesn’t mean using every AI tool simultaneously. It means building your infrastructure so that swapping providers - or running multiple - doesn’t require tearing everything down.

Here’s what that looks like in practice:

Abstract your AI calls behind an internal layer. Instead of calling OpenAI’s API directly from your application code, route through a lightweight abstraction that can point to different models. Tools like LiteLLM, or a simple internal API wrapper, let you change the underlying model without touching your application logic.
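
As a rough illustration of that internal layer, here is a minimal sketch in plain Python. It does not use LiteLLM or any vendor SDK. The names ModelGateway, fake_provider_a, fake_provider_b, and summarize_ticket are hypothetical, and the two backends are stand-ins that return canned text so the example runs without API keys. In a real system each backend would wrap one vendor's client.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional

# A backend is any function that takes a prompt and returns text.
# In a real system each backend would wrap one vendor's SDK or HTTP API.
Backend = Callable[[str], str]


@dataclass
class ModelGateway:
    """Single internal entry point for AI calls (hypothetical name)."""
    backends: Dict[str, Backend] = field(default_factory=dict)
    default: str = ""

    def register(self, name: str, backend: Backend, make_default: bool = False) -> None:
        self.backends[name] = backend
        # The first backend registered becomes the default unless overridden.
        if make_default or not self.default:
            self.default = name

    def complete(self, prompt: str, provider: Optional[str] = None) -> str:
        name = provider or self.default
        if name not in self.backends:
            raise KeyError(f"no backend registered for {name!r}")
        return self.backends[name](prompt)


# Stand-in backends (assumptions) so the sketch runs offline.
def fake_provider_a(prompt: str) -> str:
    return f"[provider-a] {prompt}"


def fake_provider_b(prompt: str) -> str:
    return f"[provider-b] {prompt}"


gateway = ModelGateway()
gateway.register("provider-a", fake_provider_a)
gateway.register("provider-b", fake_provider_b)


def summarize_ticket(text: str) -> str:
    # Application code only knows about the gateway, never a vendor.
    return gateway.complete(f"Summarize: {text}")
```

With this shape, switching the default provider is a one-line change (gateway.default = "provider-b") and summarize_ticket does not need to be edited.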

Separate your prompts from your code. If your prompts are hardcoded in your application, changing models is a rewrite job. Treat prompts as configuration - versioned, stored separately, and testable. This also makes it easier to benchmark different models against each other.
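
One way to treat prompts as configuration is sketched below, under some assumptions: the prompt names, the version labels, and the render_prompt helper are all invented for illustration, and the JSON is inlined only so the example is runnable. In practice it would more likely live in its own versioned file.

```python
import json
from string import Template

# In practice this would be a separate, version-controlled file
# (for example a hypothetical prompts.json); it is inlined here
# so the sketch runs on its own.
PROMPTS_JSON = """
{
  "summarize_contract": {
    "v1": "Summarize this contract in $max_words words or fewer:\\n$document",
    "v2": "List the key obligations in this contract, in $max_words words or fewer:\\n$document"
  }
}
"""

PROMPTS = json.loads(PROMPTS_JSON)


def render_prompt(name: str, version: str, **variables: object) -> str:
    """Look up a prompt by name and version, then fill in its variables."""
    template = Template(PROMPTS[name][version])
    # substitute() raises KeyError if a required variable is missing,
    # which surfaces prompt/config mismatches early.
    return template.substitute(**variables)


prompt = render_prompt("summarize_contract", "v1", max_words=50, document="ACME MSA")
```

Because the application asks for a prompt by name and version, the same test inputs can be rendered against "v1" and "v2" and sent to different models without touching application code.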

Run a parallel evaluation before you commit. Before locking in a model for a major workflow, run the same prompts through two or three alternatives. Score the outputs on what actually matters for that task - accuracy, format consistency, latency, cost. You’ll often find that the “default” choice isn’t the best choice for your specific use case.
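
A parallel evaluation can start as a very small harness. The sketch below is illustrative only: the two "models" are stub functions standing in for real providers, the test cases are made up, and the scoring covers accuracy, format consistency (does the output parse as JSON?), and latency. Cost is left out because it depends on each vendor's current pricing.

```python
import json
import time
from typing import Callable, Dict, List, Tuple

Model = Callable[[str], str]


def evaluate(models: Dict[str, Model], cases: List[Tuple[str, str]]) -> Dict[str, dict]:
    """Run every case through every model and score the outputs."""
    if not cases:
        raise ValueError("at least one test case is required")
    report = {}
    for name, model in models.items():
        correct = 0
        valid_json = 0
        start = time.perf_counter()
        for prompt, expected in cases:
            output = model(prompt)
            try:
                parsed = json.loads(output)
            except json.JSONDecodeError:
                continue  # wrong format counts against both scores
            valid_json += 1
            if isinstance(parsed, dict) and parsed.get("label") == expected:
                correct += 1
        elapsed = time.perf_counter() - start
        report[name] = {
            "accuracy": correct / len(cases),
            "format_ok": valid_json / len(cases),
            "avg_latency_s": elapsed / len(cases),
        }
    return report


# Stub models (assumptions): real ones would call a provider's API.
def stub_model_a(prompt: str) -> str:
    label = "refund" if "refund" in prompt.lower() else "shipping"
    return json.dumps({"label": label})


def stub_model_b(prompt: str) -> str:
    if "order" in prompt.lower():
        return '{"label": "shipping"}'
    return "Label: refund"  # right idea, wrong format


cases = [
    ("I want a refund", "refund"),
    ("Where is my order?", "shipping"),
    ("Please refund me", "refund"),
]
report = evaluate({"stub-a": stub_model_a, "stub-b": stub_model_b}, cases)
```

In this toy run, stub-b answers in free text for two of the three cases. It is the kind of format failure that a side-by-side test surfaces quickly.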

Build model-specific handling for critical workflows. Some models are better at structured outputs. Some are better at long document analysis. Some are faster and cheaper for simple classification tasks. A model-agnostic stack doesn’t mean treating all models as interchangeable - it means using the right model for each job and being able to change your mind later.
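
In code, "the right model for each job" often comes down to a routing table. The sketch below assumes three task types and invented model names ("small-fast-model" and so on). The character limits are placeholders, not real context-window figures for any vendor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    max_input_chars: int


# Hypothetical routing table: task type -> preferred model.
ROUTES = {
    "classification": Route(model="small-fast-model", max_input_chars=4_000),
    "long_document": Route(model="long-context-model", max_input_chars=400_000),
    "structured_output": Route(model="json-reliable-model", max_input_chars=20_000),
}
DEFAULT_ROUTE = Route(model="general-model", max_input_chars=20_000)


def pick_model(task: str, text: str) -> str:
    """Choose a model for a task, falling back when the input is too large."""
    route = ROUTES.get(task, DEFAULT_ROUTE)
    if len(text) > route.max_input_chars:
        # Input exceeds the preferred model's limit: use the long-context route.
        return ROUTES["long_document"].model
    return route.model
```

Keeping the table in one place means "changing your mind later" is an edit to ROUTES rather than a search through application code.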

Track your actual spend and token usage by model. Most businesses can’t tell you which AI calls are costing them the most. If you can’t measure it, you can’t optimize it - and you definitely can’t make an informed decision about where to shift volume.
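
A usage ledger does not need to be elaborate to be useful. The following sketch records token counts per model and workflow and turns them into cost. The model names and the per-million-token prices are placeholders, not any vendor's actual rates, and UsageLedger is a hypothetical class.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_million: float   # currency units per 1M input tokens
    output_per_million: float  # currency units per 1M output tokens


# Placeholder prices (assumptions); replace with your contracted rates.
PRICES = {
    "model-x": Price(input_per_million=2.0, output_per_million=8.0),
    "model-y": Price(input_per_million=0.5, output_per_million=1.5),
}


class UsageLedger:
    """Accumulates tokens and cost per (model, workflow) pair."""

    def __init__(self) -> None:
        self.cost = defaultdict(float)
        self.tokens = defaultdict(int)

    def record(self, model: str, workflow: str, input_tokens: int, output_tokens: int) -> float:
        price = PRICES[model]
        cost = (
            input_tokens * price.input_per_million
            + output_tokens * price.output_per_million
        ) / 1_000_000
        key = (model, workflow)
        self.cost[key] += cost
        self.tokens[key] += input_tokens + output_tokens
        return cost

    def top_spenders(self, n: int = 3):
        """Return the n most expensive (model, workflow) pairs, highest first."""
        return sorted(self.cost.items(), key=lambda kv: kv[1], reverse=True)[:n]


ledger = UsageLedger()
ledger.record("model-x", "contract-review", input_tokens=1_000_000, output_tokens=500_000)
ledger.record("model-y", "ticket-triage", input_tokens=2_000_000, output_tokens=0)
```

Calling record from the same internal layer that makes the AI calls keeps the numbers complete, and top_spenders gives a first answer to "which calls are costing us the most".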

What to Do in the Next 90 Days

If you’re in the middle of building or scaling your AI infrastructure, here’s the practical sequence:

First, audit what you’ve already built. Document which providers are being called, from where, for what purpose, and at what cost. You probably have more exposure to a single provider than you realize.
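
For Python codebases, a first pass at that audit can be automated. The sketch below is a starting point rather than a complete audit: it only looks for import statements of three SDK package names (openai, anthropic, google.generativeai) and will miss direct HTTP calls, other languages, and third-party tools that call a provider on your behalf. The audit_sources function and the sample file paths are hypothetical. Real use would read the files from disk.

```python
import re
from collections import defaultdict
from typing import Dict, List

# Import patterns for a few provider SDKs. Extend as needed.
PROVIDER_PATTERNS = {
    "openai": re.compile(r"^\s*(?:import|from)\s+openai\b", re.MULTILINE),
    "anthropic": re.compile(r"^\s*(?:import|from)\s+anthropic\b", re.MULTILINE),
    "google": re.compile(r"^\s*(?:import|from)\s+google\.generativeai\b", re.MULTILINE),
}


def audit_sources(sources: Dict[str, str]) -> Dict[str, List[str]]:
    """Map each provider to the files that import its SDK.

    `sources` maps a file path to that file's text.
    """
    found = defaultdict(list)
    for path, text in sources.items():
        for provider, pattern in PROVIDER_PATTERNS.items():
            if pattern.search(text):
                found[provider].append(path)
    return {provider: sorted(paths) for provider, paths in found.items()}


# Made-up sample input so the sketch runs without touching the filesystem.
sample = {
    "billing/summarize.py": "import openai\n\ndef run():\n    pass\n",
    "support/triage.py": "from anthropic import Anthropic\n",
    "utils/dates.py": "import datetime\n",
}
exposure = audit_sources(sample)
```

The resulting map of provider to files covers the "from where" part of the audit. Purpose and cost still have to be filled in by the teams that own each workflow.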

Second, identify your highest-risk dependencies. Which workflows would break, or degrade significantly, if your primary AI vendor changed pricing, updated a model, or went down for four hours? Those are your priorities.

Third, test at least one alternative for your top three use cases. Not as a full migration - as an evaluation. Run the same inputs through Claude, Gemini, or whichever model makes sense, and compare the outputs on criteria that matter to your business.

Fourth, build the abstraction layer before you need it. It’s much cheaper to add this now than to retrofit it when you’re in the middle of a vendor crisis.

The Bigger Shift to Watch

The companies that will be in the best position 18 months from now aren’t the ones that picked the right provider today. They’re the ones that built AI infrastructure that isn’t brittle - that can adapt as the market evolves, as new models emerge, and as their own needs change.

The secondary market signal on OpenAI versus Anthropic is one data point. The real pattern is that the AI vendor landscape is competitive, it’s moving fast, and the era of one obvious default provider is probably over.

That’s not a problem if you’ve built for flexibility. It’s a serious problem if you’ve built for one provider and assumed that was enough.

If you’re not sure how exposed your business is to single-vendor AI risk, Le Ventures offers a free AI infrastructure audit. We’ll map what you’ve built, identify the brittleness, and give you a clear picture of what a more resilient stack would look like - without a sales pitch attached.