Long-Running AI Agents: What Changes for Your Business

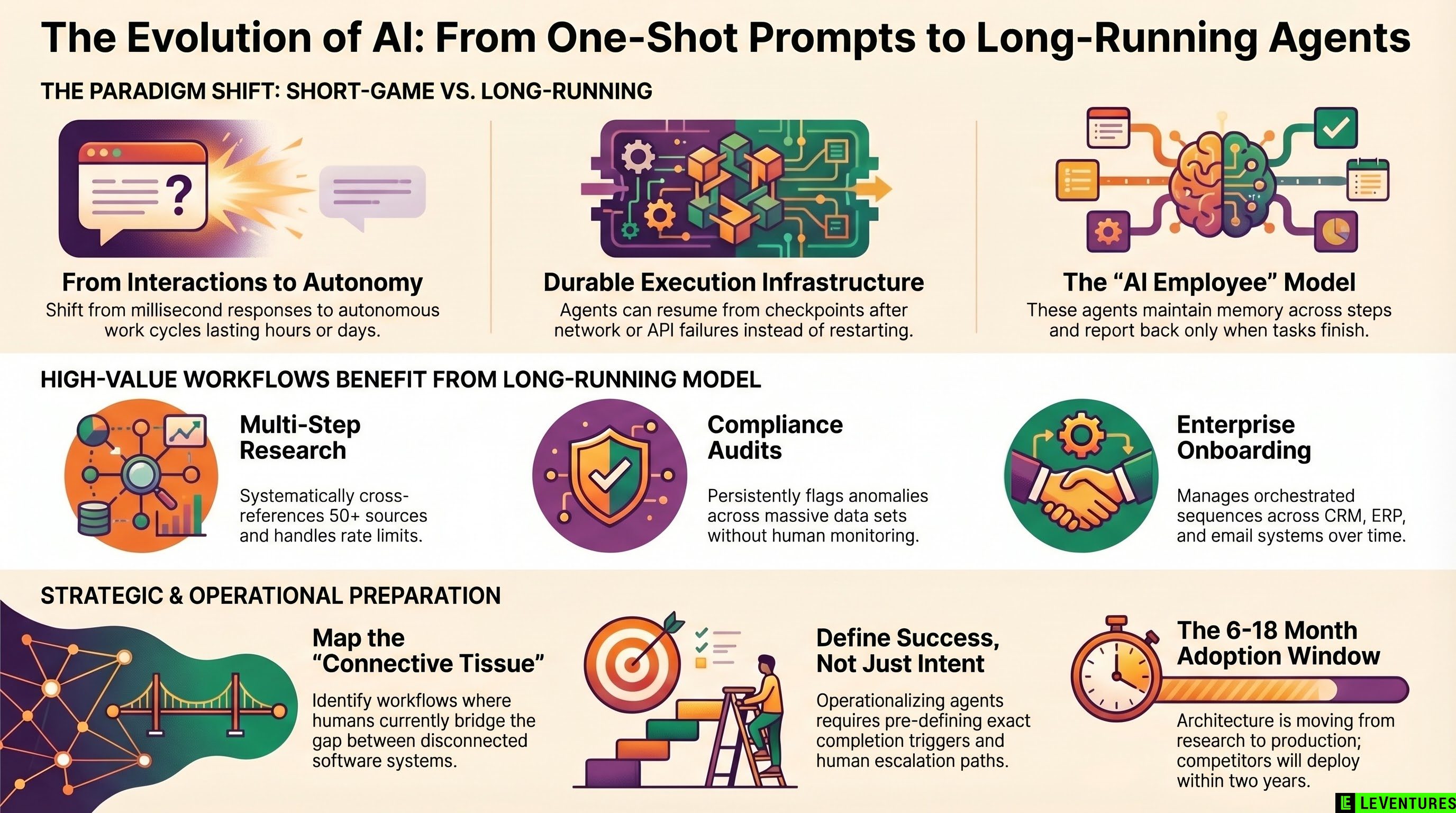

Most businesses using AI today are playing a very short game. You type something, the AI responds, you move on. That interaction model - prompt in, answer out - has been the default assumption since ChatGPT launched.

That assumption is about to break.

Anthropic just published its engineering approach to what it calls Managed Agents: a hosted infrastructure layer designed specifically for AI agents that run for hours or days, not seconds. This is not a research paper. It is a production architecture decision, which means the technology is moving from “interesting concept” to “thing your competitors will deploy.”

Understanding what this shift means operationally is worth your time right now.

What “Long-Running” Actually Means

When people talk about AI agents, they usually picture something fast. Ask a question, get a plan, maybe run a few tool calls. Done in under a minute.

Long-running agents are different in kind, not just degree. They are designed to:

- Work autonomously over extended periods without human check-ins

- Maintain context and memory across many steps

- Pause, wait for external events, and resume

- Handle failures and retry logic without losing progress

- Report back only when there is something meaningful to report

Think less “AI assistant” and more “AI employee who is handling something while you sleep.”

The managed infrastructure piece matters here. One of the hard engineering problems with long-running tasks is what happens when the process crashes halfway through, or the network drops, or an API times out. Anthropic’s approach is to build durable execution into the service layer itself, so the agent can resume from a checkpoint rather than starting over. It is the same kind of guarantee that makes bank transfers trustworthy: an interrupted operation picks up where it left off instead of being lost or run twice.
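To make the checkpoint idea concrete, here is a minimal sketch in Python. It is not Anthropic's implementation or API. The function name, the JSON file format, and the `process` callback are all assumptions made for illustration. It shows only the general pattern: save progress after every step so a restart continues from the last completed item.

```python
import json
import os


def run_with_checkpoints(items, process, checkpoint_path):
    """Process items in order, saving progress after each one.

    Hypothetical helper: the checkpoint format is an assumption,
    not something defined by any particular agent platform.
    """
    # Resume from earlier progress if a checkpoint file already exists.
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            state = json.load(f)
    else:
        state = {"next_index": 0, "results": []}

    for i in range(state["next_index"], len(items)):
        state["results"].append(process(items[i]))
        state["next_index"] = i + 1
        # Write to a temp file and rename it, so a crash mid-write
        # never leaves a half-written checkpoint behind.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump(state, f)
        os.replace(tmp_path, checkpoint_path)

    return state["results"]
```

If the process dies on item 30 of 50, calling the function again with the same checkpoint path skips the 29 finished items and retries item 30. A managed service would handle this for you. The sketch is only meant to show what "resume from a checkpoint" means in practice.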

The Workflows That Actually Benefit

Not every business process needs this. Some things should stay fast and synchronous. But there is a real category of work where the long-running model unlocks something genuinely new.

Multi-step research and analysis. Say you want competitive intelligence on 50 companies: financials, recent news, product changes, key hires. A one-shot prompt cannot do this well. A long-running agent can work through the list systematically, handle rate limits, cross-reference sources, and deliver a finished report.

Contract and document review pipelines. A law firm or procurement team processing hundreds of documents does not need each review in two seconds. They need reliable, complete processing with consistent output formatting. An agent that runs overnight and surfaces exceptions in the morning is more valuable than one that crashes or cuts corners under a tight timeout.

Customer onboarding workflows. Onboarding a new enterprise client involves pulling data from multiple systems, sending templated communications, flagging missing information, scheduling follow-ups, and updating CRM records. These are not instant tasks. They are orchestrated sequences that benefit from a persistent process managing the whole thing.

Supplier or vendor monitoring. An agent that watches for price changes, compliance updates, or risk signals across your vendor base - continuously, in the background - provides value that a one-shot query cannot match.

Internal audit and compliance checks. This means running policy checks across large data sets, flagging anomalies, and generating summaries for review. It is exactly the kind of tedious, high-stakes work that benefits from an agent that takes its time and does it right.

What Changes Operationally

Deploying long-running agents is not just a technical upgrade. It changes how you think about workflow design.

You need to define completion, not just intent. With a chatbot, the conversation ends when the user stops talking. With a long-running agent, you need to specify: what does “done” look like? What does success look like versus partial success? What should trigger a human review? These are questions you need to answer before deployment, not after.
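One way to force those answers before deployment is to write them down as code. The sketch below is a hypothetical example, not part of any vendor's product. The names `RunSummary`, `Outcome`, and `classify_run`, and the threshold of five exceptions, are assumptions you would replace with your own definitions.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    DONE = "done"
    PARTIAL = "partial"
    NEEDS_REVIEW = "needs_review"


@dataclass
class RunSummary:
    total_items: int         # how many items the agent was asked to handle
    processed: int           # how many it actually finished
    exceptions_flagged: int  # items the agent marked as unusual


def classify_run(summary, max_exceptions_without_review=5):
    """Turn a run summary into an explicit outcome (illustrative thresholds)."""
    # Too many flagged exceptions triggers a human review, finished or not.
    if summary.exceptions_flagged > max_exceptions_without_review:
        return Outcome.NEEDS_REVIEW
    # Unprocessed items mean partial success, not success.
    if summary.processed < summary.total_items:
        return Outcome.PARTIAL
    return Outcome.DONE
```

For example, `classify_run(RunSummary(total_items=100, processed=80, exceptions_flagged=2))` returns `Outcome.PARTIAL`. The numbers matter less than the fact that someone had to decide them in advance.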

Error handling becomes a design requirement. A fast AI tool fails fast - you notice immediately and try again. An agent running overnight that fails at hour six with no notification is a different problem. You need to think about logging, alerting, and escalation paths as part of the initial design.
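As a rough illustration of what "logging, alerting, and escalation" can look like, here is a small Python sketch. The function name and its parameters are hypothetical, and the `alert` callback stands in for whatever you actually use (email, chat, a paging tool). It retries a failing step with increasing delays, logs every failure, and escalates once retries run out rather than failing silently.

```python
import logging
import time

logger = logging.getLogger("agent_run")


def run_step_with_retries(step, max_attempts=3, base_delay_s=1.0,
                          alert=None, sleep=time.sleep):
    """Run one step, retrying with exponential backoff (illustrative only)."""
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except Exception as exc:
            # Every failure is logged, so the morning review has a trail.
            logger.warning("attempt %d/%d failed: %s", attempt, max_attempts, exc)
            if attempt == max_attempts:
                # Escalate to a human instead of dying quietly at hour six.
                if alert is not None:
                    alert(f"step failed after {max_attempts} attempts: {exc}")
                raise
            # Wait 1x, 2x, 4x ... the base delay before trying again.
            sleep(base_delay_s * 2 ** (attempt - 1))
```

The design choice worth noting is the last-attempt branch: the error is both sent to a person and re-raised, so the surrounding process can stop or checkpoint instead of carrying on with bad state.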

Trust and oversight need structure. The longer an agent runs without human checkpoints, the more important it is to have guardrails on what it can and cannot do. Managed agent infrastructure typically includes tools for setting permission boundaries, audit logs, and intervention points. Use them. Do not deploy an autonomous process with broad permissions and no oversight layer.
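To show what a permission boundary and an audit log amount to, here is a simplified Python sketch. It does not reflect how any managed agent platform exposes these controls. `GuardedToolbox`, its arguments, and the tool names in the usage note are invented for illustration.

```python
from datetime import datetime, timezone


class PermissionDenied(Exception):
    pass


class GuardedToolbox:
    """Wraps an agent's tools with an allow-list, approvals, and an audit log."""

    def __init__(self, tools, allowed, needs_approval=()):
        self._tools = tools                        # name -> callable
        self._allowed = set(allowed)               # tools the agent may use
        self._needs_approval = set(needs_approval) # tools gated on a human
        self.audit_log = []

    def call(self, name, *args, approved=False, **kwargs):
        if name not in self._allowed or name not in self._tools:
            decision = "denied"
        elif name in self._needs_approval and not approved:
            decision = "pending_approval"
        else:
            decision = "allowed"
        # Record every attempt, including the ones that were blocked.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "tool": name,
            "decision": decision,
        })
        if decision != "allowed":
            raise PermissionDenied(f"{name}: {decision}")
        return self._tools[name](*args, **kwargs)
```

With a toolbox that allows `read_crm` and `send_email` but requires approval for `send_email`, the agent can read records freely, must wait for a human before emailing anyone, and cannot call a `delete_record` tool at all. Every attempt, blocked or not, ends up in `audit_log`.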

Integration is more complex. Long-running agents need to read from and write to your actual systems - CRM, ERP, email, documents. This is real integration work, not a demo. Budget for it accordingly.

The Window Is Short

Anthropic publishing this architecture signals a market shift. When infrastructure-level thinking moves from research papers to engineering blogs, production adoption tends to follow within roughly 6 to 18 months. OpenAI is framing persistent agents as the next enterprise phase. The large consulting firms are already running pilots.

The businesses that benefit most from this will not be the ones who wait for a polished SaaS wrapper to show up. They will be the ones who start mapping their workflows now and understand which processes are worth automating with persistent agents versus which are fine as one-shot tools.

That mapping exercise is not glamorous, but it is the real work. What are your highest-volume, most repetitive multi-step processes? Where are humans currently acting as the connective tissue between systems that do not talk to each other? Where do you lose the most time to context-switching because a process requires multiple sessions over multiple days?

Those are your candidates.

Where to Start

If you want to start thinking practically about this, here is a simple framework:

- List your longest-running manual workflows. Anything that takes more than a day from start to finish and involves more than three systems is worth examining.

- Identify the waiting time. A lot of multi-day processes are actually 2 hours of work spread across 5 days of waiting. Agents can compress that dramatically.

- Map the decision points. Any step that requires genuine human judgment is a checkpoint. Everything else is potentially automatable.

- Assess your data readiness. Long-running agents need clean inputs. If your data is messy or siloed, that is the first problem to solve.

- Start with low-risk, high-frequency processes. Not your core revenue flow. Something adjacent - a reporting process, an onboarding checklist, a compliance review cycle.
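The first two steps of the framework are simple enough to put in a spreadsheet or a few lines of Python. The sketch below is one hypothetical way to do it. The `Workflow` fields, the 8-hour working day, and the function names are assumptions. The thresholds (more than a day, more than three systems) come straight from the list above.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    elapsed_days: float    # calendar time from start to finish
    hands_on_hours: float  # actual human work inside that window
    systems_touched: int   # CRM, ERP, email, and so on
    judgment_steps: int    # steps that need a human decision (checkpoints)


def waiting_share(wf, hours_per_day=8):
    """Fraction of working time the process spends waiting, not working."""
    if wf.elapsed_days <= 0:
        raise ValueError("elapsed_days must be positive")
    elapsed_hours = wf.elapsed_days * hours_per_day
    return 1 - wf.hands_on_hours / elapsed_hours


def is_candidate(wf):
    """Worth examining: longer than a day and more than three systems."""
    return wf.elapsed_days > 1 and wf.systems_touched > 3
```

Taking the example from the list, 2 hours of work spread over 5 working days is 2 out of 40 hours, so about 95% of the elapsed time is waiting. That waiting share is the part an agent can compress. The `judgment_steps` stay with people.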

The architectural shift from one-shot AI to persistent agents is real, and it is happening fast. The question is not whether your industry will use this - it will. The question is whether you understand it well enough to deploy it on your terms rather than scrambling to catch up.

If you are not sure where AI can actually move the needle in your operations, Le Ventures offers a free audit that maps your workflows to the right tools - including where persistent agents make sense versus where simpler solutions do the job. No pitch, just a clear picture of where you stand.