Stop Chasing AI Updates

You didn’t hire an AI team. But if you’ve been adopting tools aggressively over the past two years, you might accidentally have one.

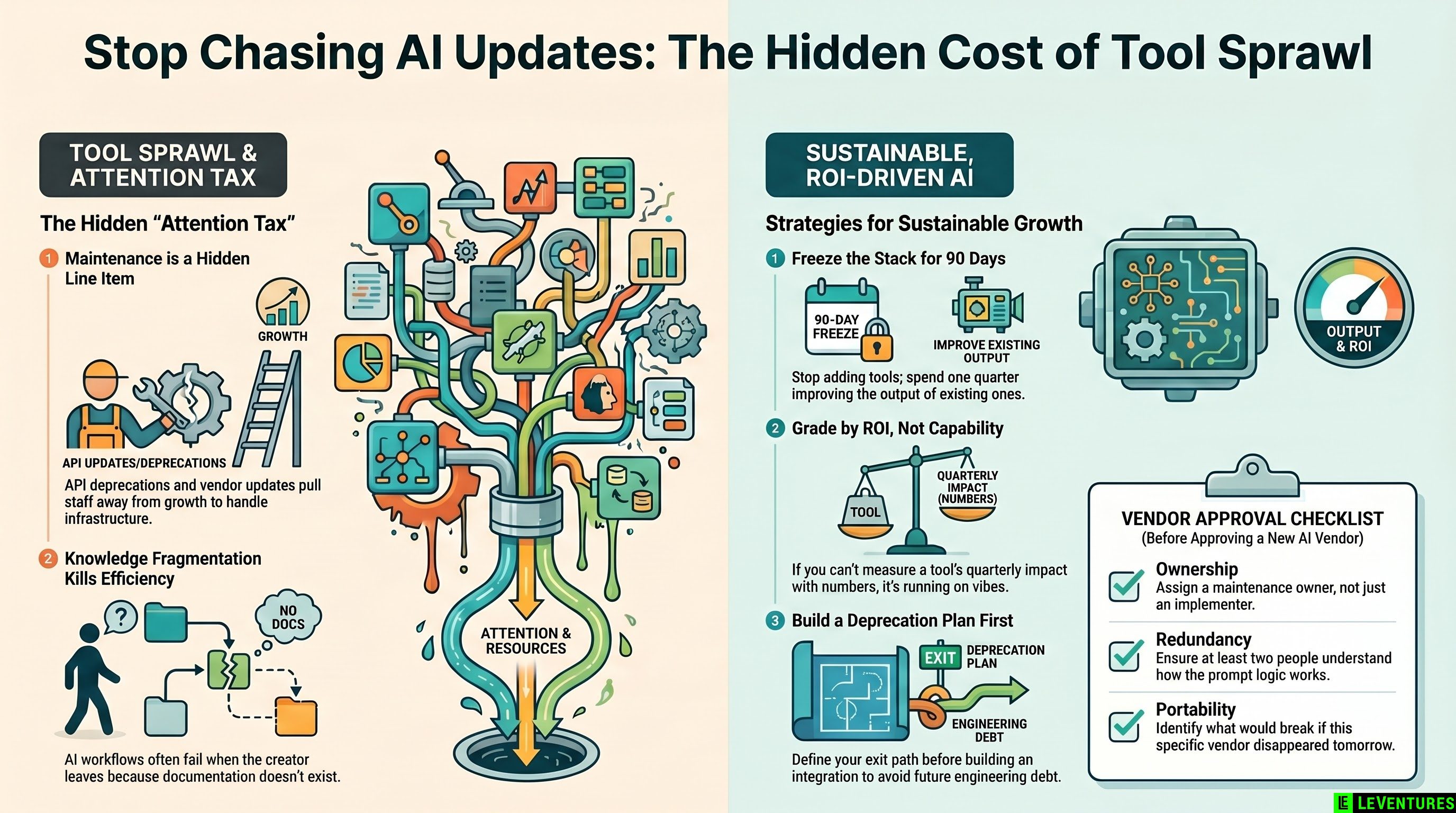

Every model upgrade, every API deprecation notice, every “exciting new feature” email from a vendor pulls someone on your team away from actual work. Multiply that by five or six tools, add a couple of custom integrations, and you’ve quietly built a maintenance operation nobody budgeted for.

The Hidden Workforce You’re Already Paying For

Most companies don’t track AI maintenance as a line item. They should.

When GPT-4 Turbo got deprecated, teams using it scrambled to test replacements. When Anthropic shifted context window pricing, someone had to audit usage. When a workflow tool dropped a feature mid-quarter, an ops person spent two days rebuilding the prompt chain.

None of that shows up as “AI cost.” It shows up as project delays, stretched engineers, and senior staff context-switching into infrastructure problems instead of growth problems.

The real cost isn’t the subscription. It’s the attention tax.

Contract Sprawl Is a Trap

Here’s how it usually goes. One team adopts a writing tool. Another grabs a meeting summarizer. A third builds a custom integration with a model API. Repeat that a few times and you have seven vendors, three contract renewal dates, two data processing agreements you haven’t fully read, and zero visibility into which tools are actually moving the needle.

The vendor lock-in paradox makes it worse. Each tool promises flexibility, but every integration you build (every prompt you tune, every workflow you wire up) is another anchor. Switching costs compound quietly until you’re effectively stuck.

Before you add another tool, map what you already have. Who owns each contract. What each tool actually outputs. What would break if it disappeared tomorrow.
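That map doesn’t need special software. As a rough sketch, it can be a short script or a spreadsheet with the same columns. Everything below is illustrative: the `Tool` fields, the `review_flags` helper, and the 60-day renewal window are assumptions, not a standard.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Tool:
    """One row in a hypothetical AI tool inventory."""
    name: str
    owner: Optional[str]   # who owns the contract (None if nobody does)
    renewal: date          # next contract renewal date
    output: str            # what the tool actually produces
    dependents: List[str] = field(default_factory=list)  # what breaks if it disappears


def review_flags(tool: Tool, today: date, window_days: int = 60) -> List[str]:
    """Return the reasons this tool deserves a closer look."""
    flags = []
    if not tool.owner:
        flags.append("no contract owner")
    if tool.renewal - today <= timedelta(days=window_days):
        flags.append("renewal inside review window")
    if not tool.dependents:
        flags.append("nothing depends on it")
    return flags


if __name__ == "__main__":
    summarizer = Tool(
        name="meeting summarizer",
        owner=None,
        renewal=date(2025, 3, 1),
        output="meeting notes",
    )
    print(review_flags(summarizer, today=date(2025, 2, 1)))
```

A tool with no owner, an upcoming renewal, and nothing depending on it is usually the first candidate to cut.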

Knowledge Fragmentation Is the Quiet Killer

When the person who built your AI workflow leaves, what’s left? Usually a Notion page nobody updates and an integration that half-works.

AI systems need institutional knowledge to maintain. Which prompts handle which edge cases. Why a certain output format was chosen. What the fallback is when the API is slow. That knowledge lives in people, not documentation, and it walks out the door.

This is why companies that treat AI as a series of one-time implementations end up rebuilding from scratch every 18 months.

How to Slow Down Intentionally

The goal isn’t to stop adopting AI. It’s to stop adopting it faster than you can absorb it.

A few practical rules that actually work:

Freeze the stack for 90 days. Pick a period where you improve what you have instead of adding more. Measure actual output quality, not feature availability.

Grade every tool by ROI, not capability. The question isn’t “what can this do?” It’s “what did it actually do for us last quarter?” If you can’t answer that with a number, the tool is running on vibes.

Build a deprecation plan before you build the integration. When a vendor discontinues a model or changes pricing, what’s your path out? If the answer is “we’ll figure it out,” you’re taking on engineering debt today and deferring the bill.

Assign a maintenance owner, not just an implementer. Whoever builds the integration shouldn’t be the only person who understands it. Redundancy in knowledge is cheaper than scrambling after turnover.

Treat model upgrades like software upgrades. Not every update needs to be applied immediately. Evaluate them on a schedule, not whenever the vendor sends a notification.
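To put a number on the ROI rule above, one possible approach is to count maintenance hours (the attention tax) alongside the subscription. This is a minimal sketch under stated assumptions: the `QuarterlyUsage` fields, the flat hourly rate, and the choice to value time saved at that same rate are all hypothetical simplifications.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class QuarterlyUsage:
    """Hypothetical quarterly figures for one tool."""
    name: str
    subscription_cost: float   # vendor fees for the quarter
    maintenance_hours: float   # staff hours spent on upgrades, fixes, audits
    hours_saved: float         # measured staff hours the tool saved


def quarterly_roi(usage: QuarterlyUsage, hourly_rate: float) -> Optional[float]:
    """Return (value - total cost) / total cost, or None if the cost is zero."""
    total_cost = usage.subscription_cost + usage.maintenance_hours * hourly_rate
    value = usage.hours_saved * hourly_rate
    if total_cost == 0:
        return None  # ROI is undefined without any cost
    return (value - total_cost) / total_cost


if __name__ == "__main__":
    summarizer = QuarterlyUsage(
        name="meeting summarizer",
        subscription_cost=3000.0,
        maintenance_hours=40.0,
        hours_saved=100.0,
    )
    # Cost: 3000 + 40 * 75 = 6000. Value: 100 * 75 = 7500. ROI: 0.25
    print(quarterly_roi(summarizer, hourly_rate=75.0))
```

In this made-up example, ignoring the 40 maintenance hours would make the same tool look like a 150% return instead of 25%, which is the gap between subscription cost and real cost.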

The Companies That Win Are Not the Fastest Adopters

They’re the ones who run fewer tools better. Who can tell you exactly what their AI stack costs, what it produces, and what they’d cut if they had to.

The AI tool graveyard is full of promising integrations that nobody maintained, contracts that auto-renewed for tools nobody used, and custom builds that became single points of failure.

The edge isn’t moving fast. It’s knowing when to stop.

If you’re not sure whether your current AI stack is working for you or against you, Le Ventures offers a free audit to help you find out.